DP-600 Exam Dumps - Implementing Analytics Solutions Using Microsoft Fabric

Searching for workable clues to ace the Microsoft DP-600 exam? You’re in the right place! ExamCert offers realistic, trusted, and authentic exam prep tools to help you earn your desired credential. ExamCert’s DP-600 PDF Study Guide, Testing Engine, and Exam Dumps follow a reliable exam preparation strategy, providing the most relevant and up-to-date study material in an easy-to-learn question-and-answer format. ExamCert’s study tools aim to simplify the exam’s complex and confusing concepts and to familiarize you with the real exam scenario, which you can practice using the testing engine and real exam dumps.

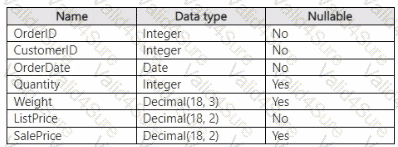

You have a Fabric warehouse that contains a table named Sales.Orders. Sales.Orders contains the following columns.

You need to write a T-SQL query that will return the following columns.

How should you complete the code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

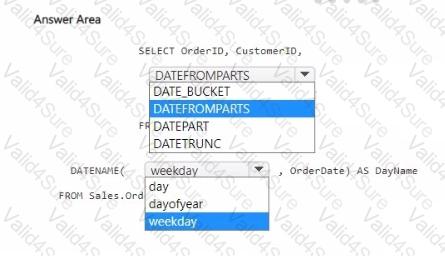

You have a Fabric tenant that contains a workspace named Workspace1. Workspace1 contains a lakehouse named LH1 and a warehouse named DW1. LH1 contains a table named signindata that is in the dbo schema.

You need to create a stored procedure in DW1 that deduplicates the data in the signindata table.

How should you complete the T-SQL statement? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

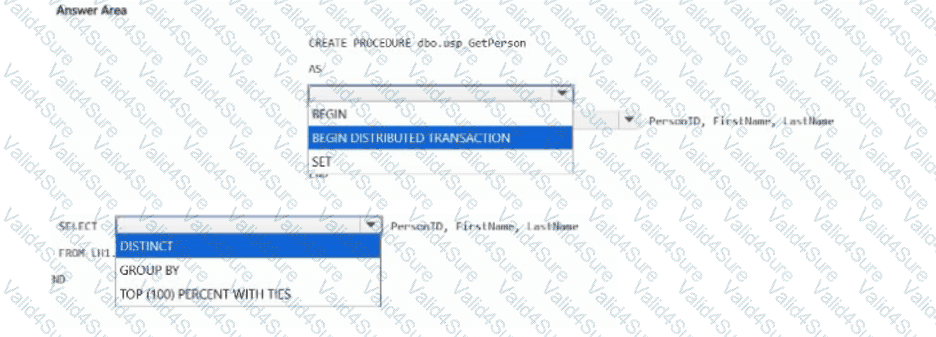

You have a KQL database that contains a table named Readings.

You need to query Readings and return the results shown in the following table.

How should you complete the query? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Which type of data store should you recommend in the AnalyticsPOC workspace?

You need to recommend a solution to prepare the tenant for the PoC.

Which two actions should you recommend performing from the Fabric Admin portal? Each correct answer presents part of the solution.

NOTE: Each correct answer is worth one point.